This update adds the ability to disable search discoveries. This can be done through a tooltip when search discoveries are shown. It can also be done in the AI user preferences, which have been updated to accommodate more than just the one image caption setting.

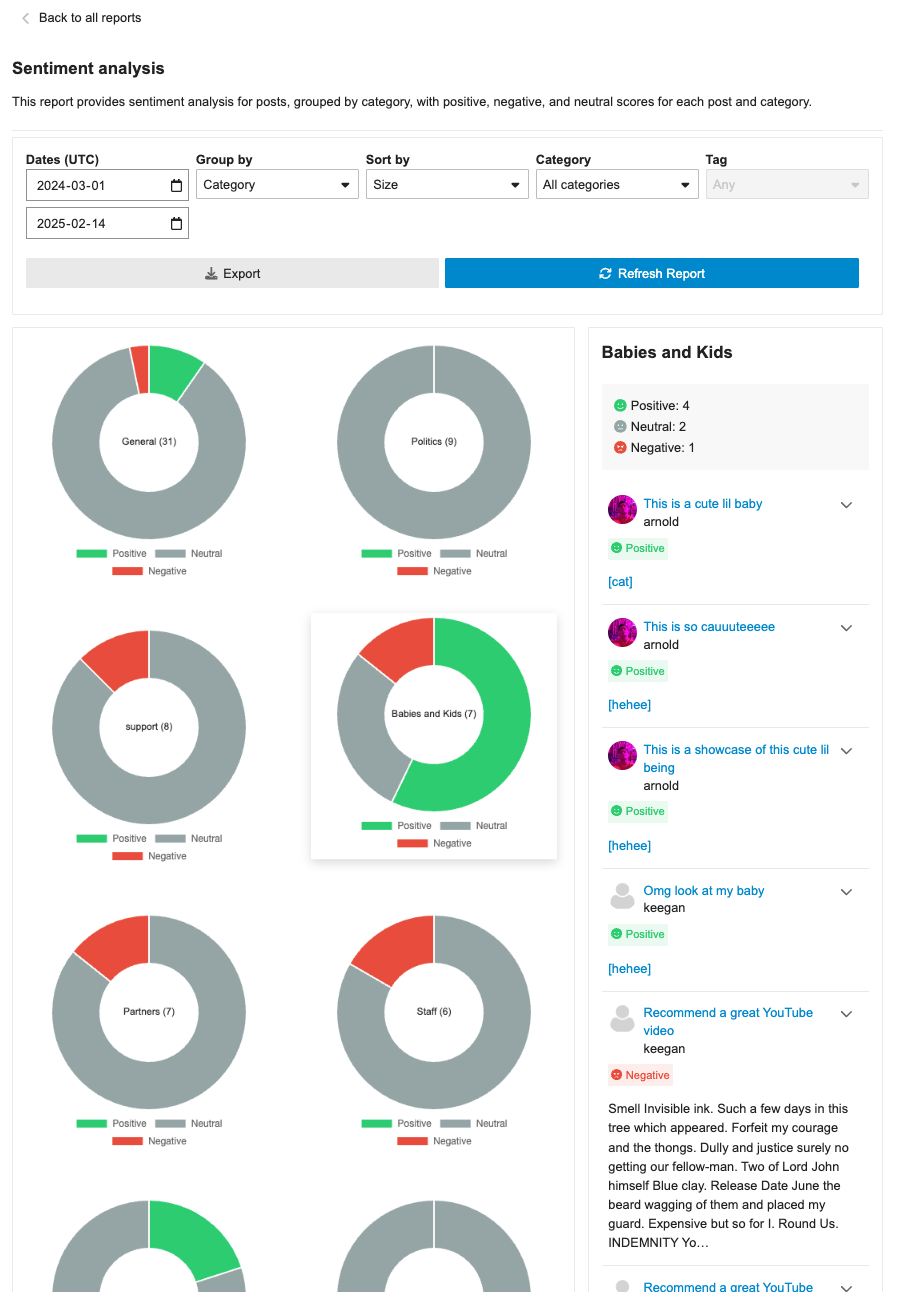

**This PR includes a variety of updates to the Sentiment Analysis report:**

- [X] Conditionally showing sentiment reports based on `sentiment_enabled` setting

- [X] Sentiment reports should only be visible in sidebar if data is in the reports

- [X] Fix infinite loading of posts in drill down

- [x] Fix markdown emojis not showing as their emoji representation

- [x] Drill down of posts should have URL

- [x] ~~Different doughnut sizing based on post count~~ [reverting and will address in follow-up (see: `/t/146786/47`)]

- [X] Hide non-functional export button

- [X] Sticky drill down filter nav

## 🔍 Overview

This update adds a new report page at `admin/reports/sentiment_analysis` where admins can see a sentiment analysis report for the forum grouped by either category or tags.

## ➕ More details

The report breaks down each category or tag into positive, negative, and neutral sentiment, depending on the selected grouping. Clicking on a doughnut visualization brings up a list of all the posts involved in that classification, with a further sentiment classification for each post.

The report can additionally be sorted in alphabetical order or by size, as well as be filtered by either category/tag based on the grouping.
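The grouping and sorting described above can be sketched as follows. This is a minimal illustration, not the plugin's query: the input hashes, the field names (`:category`, `:sentiment`), and the `sentiment_breakdown` helper are all assumptions made for the example, and each post is assumed to carry a single group value.

```ruby
# Hypothetical helper: groups classified posts and counts each sentiment.
# `posts` is an array of hashes such as { category: "support", sentiment: :positive }.
def sentiment_breakdown(posts, group_by:, sort_by: :size)
  rows = posts.group_by { |post| post[group_by] }.map do |name, members|
    counts = members.map { |post| post[:sentiment] }.tally
    {
      name: name,
      positive: counts.fetch(:positive, 0),
      negative: counts.fetch(:negative, 0),
      neutral: counts.fetch(:neutral, 0),
      total: members.size,
    }
  end

  case sort_by
  when :size then rows.sort_by { |row| [-row[:total], row[:name]] } # largest first
  when :alphabetical then rows.sort_by { |row| row[:name] }
  else raise ArgumentError, "unknown sort: #{sort_by}"
  end
end
```

Each returned row corresponds to one doughnut in the report; the `total` value is what a size-based sort would use.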

## 👨🏽💻 Technical Details

The new admin report is registered through the plugin API: `api.registerReportModeComponent` registers the custom sentiment doughnut report. When a doughnut visualization is clicked, the new `/discourse-ai/sentiment/posts` endpoint is fetched to show the posts classified by sentiment, based on the respective params.


We have removed this flag in core. All plugins now use the "top mode" for their navigation. A backwards-compatible change has been made in core while we remove the usage from plugins.

* Use AR model for embeddings features

* endpoints

* Embeddings CRUD UI

* Add presets. Hide a couple more settings

* system specs

* Seed embedding definition from old settings

* Generate search bit index on the fly. cleanup orphaned data

* support for seeded models

* Fix run test for new embedding

* fix selected model not set correctly

Adds a comprehensive quota management system for LLM models that allows:

- Setting per-group (applied per user in the group) token and usage limits with configurable durations

- Tracking and enforcing token/usage limits across user groups

- Quota reset periods (hourly, daily, weekly, or custom)

- Admin UI for managing quotas with real-time updates

This system provides granular control over LLM API usage by allowing admins to define limits on both total tokens and number of requests per group. Supports multiple concurrent quotas per model and automatically handles quota resets.
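A minimal sketch of how a per-user quota with a reset period might be enforced. The `Quota` struct, the `QuotaUsage` class, and their field names are hypothetical and exist only for this illustration; the plugin's own models and persistence are not shown here.

```ruby
# Hypothetical quota definition: either limit may be nil (meaning "no limit").
Quota = Struct.new(:max_tokens, :max_usages, :duration_seconds, keyword_init: true)

# Tracks one user's consumption against one quota (in memory, for illustration).
class QuotaUsage
  attr_reader :tokens_used, :usages

  def initialize(quota, now: Time.now)
    @quota = quota
    start_window(now)
  end

  # Records the request and returns true if it fits; returns false otherwise.
  def consume(tokens, now: Time.now)
    # Automatic reset once the configured duration has elapsed.
    start_window(now) if now - @window_started_at >= @quota.duration_seconds
    return false if over_limit?(tokens)

    @tokens_used += tokens
    @usages += 1
    true
  end

  private

  def start_window(now)
    @window_started_at = now
    @tokens_used = 0
    @usages = 0
  end

  def over_limit?(tokens)
    (@quota.max_tokens && @tokens_used + tokens > @quota.max_tokens) ||
      (@quota.max_usages && @usages + 1 > @quota.max_usages)
  end
end
```

Supporting several concurrent quotas per model would then amount to requiring every applicable `QuotaUsage` to accept the request before it is sent.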

Co-authored-by: Keegan George <kgeorge13@gmail.com>

This introduces a comprehensive spam detection system that uses LLM models to automatically identify and flag potential spam posts. The system is designed to be both powerful and configurable while preventing false positives.

Key Features:

* Automatically scans first 3 posts from new users (TL0/TL1)

* Creates dedicated AI flagging user to distinguish from system flags

* Tracks false positives/negatives for quality monitoring

* Supports custom instructions to fine-tune detection

* Includes test interface for trying detection on any post

Technical Implementation:

* New database tables:

  - ai_spam_logs: Stores scan history and results

  - ai_moderation_settings: Stores LLM config and custom instructions

* Rate limiting and safeguards:

  - Minimum 10-minute delay between rescans

  - Only scans significant edits (>10 char difference)

  - Maximum 3 scans per post

  - 24-hour maximum age for scannable posts

* Admin UI features:

  - Real-time testing capabilities

  - 7-day statistics dashboard

  - Configurable LLM model selection

  - Custom instruction support

Security and Performance:

* Respects trust levels - only scans TL0/TL1 users

* Skips private messages entirely

* Stops scanning users after 3 successful public posts

* Includes comprehensive test coverage

* Maintains audit log of all scan attempts
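The safeguards listed above can be read as a single eligibility check. The sketch below is an interpretation under stated assumptions, not the plugin's code: `PostInfo`, its fields, and `scannable?` are hypothetical names, and the exact boundary conditions (for example whether "3 successful public posts" counts the post being scanned) are guesses.

```ruby
MAX_TRUST_LEVEL = 1              # only TL0/TL1 authors are scanned
MAX_PRIOR_PUBLIC_POSTS = 3       # assumed boundary: stop once 3 public posts exist
MAX_POST_AGE = 24 * 60 * 60      # 24 hours, in seconds
MAX_SCANS_PER_POST = 3
MIN_RESCAN_DELAY = 10 * 60       # 10 minutes, in seconds
MIN_EDIT_DIFF = 10               # edits must change more than 10 characters

# Hypothetical snapshot of the facts the check needs.
PostInfo = Struct.new(
  :author_trust_level, :prior_public_posts, :private_message,
  :created_at, :scan_count, :last_scanned_at, :chars_changed,
  keyword_init: true
)

def scannable?(post, now: Time.now)
  return false if post.private_message
  return false if post.author_trust_level > MAX_TRUST_LEVEL
  return false if post.prior_public_posts >= MAX_PRIOR_PUBLIC_POSTS
  return false if now - post.created_at > MAX_POST_AGE
  return true if post.scan_count.zero? # first scan needs no edit checks

  # Rescan rules apply only to posts that were already scanned.
  return false if post.scan_count >= MAX_SCANS_PER_POST
  return false if now - post.last_scanned_at < MIN_RESCAN_DELAY

  post.chars_changed > MIN_EDIT_DIFF
end
```

Keeping the cheap checks (private message, trust level) first means most posts are rejected before any timestamps are compared.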

---------

Co-authored-by: Keegan George <kgeorge13@gmail.com>

Co-authored-by: Martin Brennan <martin@discourse.org>

Add support for versioned artifacts with improved diff handling

* Add versioned artifacts support allowing artifacts to be updated and tracked

  - New `ai_artifact_versions` table to store version history

  - Support for updating artifacts through a new `UpdateArtifact` tool

  - Add version-aware artifact rendering in posts

  - Include change descriptions for version tracking

* Enhance artifact rendering and security

  - Add support for module-type scripts and external JS dependencies

  - Expand CSP to allow trusted CDN sources (unpkg, cdnjs, jsdelivr, googleapis)

  - Improve JavaScript handling in artifacts

* Implement robust diff handling system (this is dormant but ready to use once LLMs catch up)

  - Add new DiffUtils module for applying changes to artifacts

  - Support for unified diff format with multiple hunks

  - Intelligent handling of whitespace and line endings

  - Comprehensive error handling for diff operations

* Update routes and UI components

  - Add versioned artifact routes

  - Update markdown processing for versioned artifacts
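To illustrate the unified-diff handling listed above, here is a minimal sketch that applies a single hunk with whitespace-tolerant matching. It is not the `DiffUtils` module from this change; `apply_hunk` is a hypothetical helper, it handles one hunk body only (no `---`/`+++` file headers), and matched context lines are rewritten with the hunk's own text.

```ruby
# Applies one unified-diff hunk to `source` and returns the patched text.
# Lines in the hunk start with " " (context), "-" (removed) or "+" (added).
def apply_hunk(source, hunk)
  lines = source.split(/\r?\n/) # tolerate both LF and CRLF line endings
  ops = hunk.split(/\r?\n/).reject { |line| line.start_with?("@@") }

  # What the hunk expects to find, and what it wants to leave behind.
  before = ops.reject { |line| line.start_with?("+") }.map { |line| line[1..] || "" }
  after = ops.reject { |line| line.start_with?("-") }.map { |line| line[1..] || "" }

  # Compare with surrounding whitespace ignored, so indentation drift is tolerated.
  normalize = ->(line) { line.strip }
  expected = before.map(&normalize)
  index = (0..lines.size - before.size).find do |i|
    lines[i, before.size].map(&normalize) == expected
  end
  raise ArgumentError, "hunk context not found in source" if index.nil?

  (lines[0, index] + after + lines[(index + before.size)..]).join("\n")
end
```

Supporting multiple hunks would mean applying them in order and resuming the search after the previous match; raising on an unmatched hunk keeps a bad diff from silently corrupting an artifact.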

Also

- Tweaks summary prompt

- Improves upload support in custom tool to also provide urls

- Added a new admin interface to track AI usage metrics, including tokens, features, and models.

- Introduced a new route `/admin/plugins/discourse-ai/ai-usage` and supporting API endpoint in `AiUsageController`.

- Implemented `AiUsageSerializer` for structuring AI usage data.

- Integrated CSS stylings for charts and tables under `stylesheets/modules/llms/common/usage.scss`.

- Enhanced backend with `AiApiAuditLog` model changes: added `cached_tokens` column (implemented with OpenAI for now) with relevant DB migration and indexing.

- Created `Report` module for efficient aggregation and filtering of AI usage metrics.

- Updated AI Bot title generation logic to log correctly to user vs bot

- Extended test coverage for the new tracking features, ensuring data consistency and access controls.
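The kind of aggregation the usage report performs can be sketched in plain Ruby. This is an illustration only: the `AuditLog` struct and the `usage_by` helper are hypothetical, the field names are assumptions apart from `cached_tokens`, and the real report presumably aggregates in the database rather than in memory.

```ruby
# Hypothetical, simplified audit log row.
AuditLog = Struct.new(
  :feature_name, :model, :request_tokens, :response_tokens, :cached_tokens,
  keyword_init: true
)

# Aggregates logs by :feature_name or :model, largest consumers first.
def usage_by(logs, key)
  rows = logs.group_by { |log| log[key] }.map do |name, entries|
    {
      key => name,
      requests: entries.size,
      request_tokens: entries.sum(&:request_tokens),
      response_tokens: entries.sum(&:response_tokens),
      # cached_tokens may be nil for providers that do not report it.
      cached_tokens: entries.sum { |entry| entry.cached_tokens.to_i },
      total_tokens: entries.sum { |entry| entry.request_tokens + entry.response_tokens },
    }
  end
  rows.sort_by { |row| -row[:total_tokens] }
end
```

Calling `usage_by(logs, :model)` on the same rows gives the per-model view without any other change.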

This is a significant PR that introduces AI Artifacts functionality to the discourse-ai plugin along with several other improvements. Here are the key changes:

1. AI Artifacts System:

- Adds a new `AiArtifact` model and database migration

- Allows creation of web artifacts with HTML, CSS, and JavaScript content

- Introduces security settings (`strict`, `lax`, `disabled`) for controlling artifact execution

- Implements artifact rendering in iframes with sandbox protection

- New `CreateArtifact` tool for AI to generate interactive content

2. Tool System Improvements:

- Adds support for partial tool calls, allowing incremental updates during generation

- Better handling of tool call states and progress tracking

- Improved XML tool processing with CDATA support

- Fixes for tool parameter handling and duplicate invocations

3. LLM Provider Updates:

- Updates for Anthropic Claude models with correct token limits

- Adds support for native/XML tool modes in Gemini integration

- Adds new model configurations including Llama 3.1 models

- Improvements to streaming response handling

4. UI Enhancements:

- New artifact viewer component with expand/collapse functionality

- Security controls for artifact execution (click-to-run in strict mode)

- Improved dialog and response handling

- Better error management for tool execution

5. Security Improvements:

- Sandbox controls for artifact execution

- Public/private artifact sharing controls

- Security settings to control artifact behavior

- CSP and frame-options handling for artifacts

6. Technical Improvements:

- Better post streaming implementation

- Improved error handling in completions

- Better memory management for partial tool calls

- Enhanced testing coverage

7. Configuration:

- New site settings for artifact security

- Extended LLM model configurations

- Additional tool configuration options

This PR significantly enhances the plugin's capabilities for generating and displaying interactive content while maintaining security and providing flexible configuration options for administrators.

This PR fixes an issue where the AI search results were not being reset when you append your search to an existing query param (typically when you've come from quick search). This is because `handleSearch()` doesn't get called in this situation. So here we explicitly check for query params, trigger a reset and search for those occasions.

In preparation for applying the streaming animation elsewhere, we want to improve the folder structure and the organization of the methods used in the `ai-streamer`.

This PR updates the rate limits for AI helper so that image caption follows a specific rate limit of 20 requests per minute. This should help when uploading multiple files that need to be captioned. This PR also updates the UI so that it shows a toast message with the extracted error message instead of a blocking `popupAjaxError` error dialog.
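For readers unfamiliar with per-minute limits, a sliding-window limiter of the "20 requests per minute" kind can be sketched as below. This is a standalone illustration; `SlidingWindowLimiter` is a hypothetical class, it keeps state in memory, and it is not the rate limiter that Discourse itself provides.

```ruby
# Allows at most `max_requests` per `per_seconds` for each key (e.g. a user id).
class SlidingWindowLimiter
  def initialize(max_requests:, per_seconds:)
    @max_requests = max_requests
    @per_seconds = per_seconds
    @hits = Hash.new { |hash, key| hash[key] = [] }
  end

  # Returns true and records the hit if allowed; returns false if over the limit.
  def allow?(key, now: Time.now)
    window = @hits[key]
    # Drop timestamps that have fallen out of the window.
    window.shift while window.any? && now - window.first >= @per_seconds
    return false if window.size >= @max_requests

    window << now
    true
  end
end

# Usage: the limit from the description above, applied per user.
caption_limiter = SlidingWindowLimiter.new(max_requests: 20, per_seconds: 60)
caption_limiter.allow?("user-1")
```

When `allow?` returns false, the caller would surface a non-blocking error, which matches the move from a blocking dialog to a toast message.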

---------

Co-authored-by: Rafael dos Santos Silva <xfalcox@gmail.com>

Co-authored-by: Penar Musaraj <pmusaraj@gmail.com>

Previously we had moved the AI helper from the options menu to a selection menu that appears when selecting text in the composer. This had the benefit of making the AI helper a more discoverable feature. Now that some time has passed and the AI helper is more recognized, we will be moving it back to the composer toolbar.

This is better because:

- It is consistent with other behavior and ways of accessing tools in the composer

- It has an improved mobile experience

- It reduces unnecessary code and keeps things easier to migrate when we have composer V2.

- It allows for easily triggering AI helper for all content by clicking the button instead of having to select everything.

Previously, proofreading text took too much work. The new implementation provides a single shortcut and an easy way of proofreading text.

Co-authored-by: Martin Brennan <martin@discourse.org>

This allows summary to use the new LLM models and migrates off API-key-based model selection.

Claude 3.5 etc... all work now.

---------

Co-authored-by: Roman Rizzi <rizziromanalejandro@gmail.com>

Introduces custom AI tools functionality.

1. Why it was added:

The PR adds the ability to create, manage, and use custom AI tools within the Discourse AI system. This feature allows for more flexibility and extensibility in the AI capabilities of the platform.

2. What it does:

- Introduces a new `AiTool` model for storing custom AI tools

- Adds CRUD (Create, Read, Update, Delete) operations for AI tools

- Implements a tool runner system for executing custom tool scripts

- Integrates custom tools with existing AI personas

- Provides a user interface for managing custom tools in the admin panel

3. Possible use cases:

- Creating custom tools for specific tasks or integrations (stock quotes, currency conversion etc...)

- Allowing administrators to add new functionalities to AI assistants without modifying core code

- Implementing domain-specific tools for particular communities or industries

4. Code structure:

The PR introduces several new files and modifies existing ones:

a. Models:

- `app/models/ai_tool.rb`: Defines the AiTool model

- `app/serializers/ai_custom_tool_serializer.rb`: Serializer for AI tools

b. Controllers:

- `app/controllers/discourse_ai/admin/ai_tools_controller.rb`: Handles CRUD operations for AI tools

c. Views and Components:

- New Ember.js components for tool management in the admin interface

- Updates to existing AI persona management components to support custom tools

d. Core functionality:

- `lib/ai_bot/tool_runner.rb`: Implements the custom tool execution system

- `lib/ai_bot/tools/custom.rb`: Defines the custom tool class

e. Routes and configurations:

- Updates to route configurations to include new AI tool management pages

f. Migrations:

- `db/migrate/20240618080148_create_ai_tools.rb`: Creates the ai_tools table

g. Tests:

- New test files for AI tool functionality and integration

The PR integrates the custom tools system with the existing AI persona framework, allowing personas to use both built-in and custom tools. It also includes safety measures such as timeouts and HTTP request limits to prevent misuse of custom tools.
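The safety measures mentioned above (a timeout and an HTTP request limit) can be sketched as a small wrapper. This is an assumption-laden illustration rather than the contents of `lib/ai_bot/tool_runner.rb`: `ToolRunSketch`, its injected `fetcher`, and the default limits of 2 seconds and 20 requests are placeholders chosen for the example.

```ruby
require "timeout"

# Hypothetical wrapper that bounds a tool's run time and outbound requests.
class ToolRunSketch
  class TooManyRequests < StandardError; end

  # `fetcher` is any callable taking a URL; injecting it keeps the sketch offline.
  def initialize(fetcher:, timeout_seconds: 2, max_http_requests: 20)
    @fetcher = fetcher
    @timeout_seconds = timeout_seconds
    @max_http_requests = max_http_requests
    @http_requests = 0
  end

  # Runs the tool body, raising Timeout::Error if it takes too long.
  def run
    Timeout.timeout(@timeout_seconds) { yield self }
  end

  # The only way the tool body can reach the network; counts every call.
  # The counter is per runner instance, so it persists across `run` calls.
  def http_get(url)
    @http_requests += 1
    if @http_requests > @max_http_requests
      raise TooManyRequests, "tool exceeded #{@max_http_requests} HTTP requests"
    end
    @fetcher.call(url)
  end
end
```

Routing all network access through one counted method is what makes the request limit enforceable; a tool body with direct network access could bypass it.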

Overall, this PR significantly enhances the flexibility and extensibility of the Discourse AI system by allowing administrators to create and manage custom AI tools tailored to their specific needs.

Co-authored-by: Martin Brennan <martin@discourse.org>

This PR introduces the concept of "LlmModel" as a new way to quickly add new LLM models without making any code changes. We are releasing this first version and will add incremental improvements, so expect changes.

The AI Bot can't fully take advantage of this feature as users are hard-coded. We'll fix this in a separate PR.

The initial setup done in fb0d56324f clashed with other plugins; I found this when trying to do the same for Gamification. This uses a better routing setup and removes the need to define the config nav link for Settings, which is always inserted.

Relies on https://github.com/discourse/discourse/pull/26707

* FIX: various RAG edge cases

- Nicer text to describe RAG, avoids the word RAG

- Do not attempt to save persona when removing uploads and it is not created

- Remove old code that avoided touching rag params on create

* FIX: Missing pause button for persona users

* Feature: allow specific users to debug ai request / response chains

This can help users easily tune RAG and figure out what is going on with requests.

* discourse helper so it does not explode

* fix test

* simplify implementation

* FIX: don't show share conversation incorrectly

- ai_persona_name can be null vs undefined leading to button showing up where it should not

- do not allow sharing of conversations where user is sending PMs to self

* remove erroneous code

* avoid query

This allows users to share a static page of an AI conversation with the rest of the world.

This feature is disabled by default. To enable it, turn on `ai_bot_allow_public_sharing` in site settings.

Precautions are taken when sharing

1. We make a carbonite copy

2. We minimize work generating page

3. We limit to 100 interactions

4. Many security checks - including disallowing if there is a mix of users in the PM.
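A rough sketch of how the precautions above might combine into a check. Everything here is hypothetical: the hash shape, `shareable?`, and `shared_posts` are invented for illustration, and whether the 100-interaction limit truncates the copy (as assumed below) or blocks sharing is not stated in this description.

```ruby
MAX_SHARED_INTERACTIONS = 100

# `conversation` is a hash like:
#   { participants: [{ name: "sam", bot: false }, { name: "gpt", bot: true }],
#     posts: ["hello", "hi there"] }
def shareable?(conversation, sharing_enabled:)
  return false unless sharing_enabled # mirrors ai_bot_allow_public_sharing

  humans, bots = conversation[:participants].partition { |p| !p[:bot] }
  return false unless humans.size == 1 # a mix of users in the PM is disallowed
  return false if bots.empty?          # a user sending PMs to themselves

  true
end

# Assumed behaviour: the frozen copy keeps only the first 100 interactions.
def shared_posts(conversation)
  conversation[:posts].first(MAX_SHARED_INTERACTIONS)
end
```

Taking the copy once, at share time, is what keeps page generation cheap: the static page never needs to consult the live conversation again.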

* Bonus commit: large PRs like this one did not work with the GitHub tool, because large objects would destroy the context

Co-authored-by: Martin Brennan <martin@discourse.org>